VideoGameBench

Alex L. Zhang, Thomas L. Griffiths, Karthik R. Narasimhan, Ofir Press · Princeton University

Results

| MODEL | OVERALL SCORE | CIVILIZATION I | THE NEED FOR SPEED | THE INCREDIBLE MACHINE | POKEMON CRYSTAL | DOOM II | KIRBY'S DREAM LAND (DX) | LINK'S AWAKENING (DX) | SECRET GAME #1 | SECRET GAME #2 | SECRET GAME #3 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| VG-Agent + Claude Opus 4.6 (claude-opus-4-6) | 4.6% | 25% | 0% | 0% | 7% | 0% | 0% | 0% | 27% | 0% | 0% |
| VG-Agent + Gemini 3.1 Pro Preview (gemini-3.1-pro-preview) | 4.6% | 25% | 0% | 0% | 0% | 0% | 0% | 0% | 36% | 0% | 0% |
| VG-Agent + GPT 5.4 (gpt-5.4) | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 0% |

Full = real-time play; Lite = game pauses while the model thinks.

About the benchmark

VideoGameBench is a benchmark composed of a diverse suite of 23 curated video games split across a dev and test set, with an environment to evaluate and communicate with VLM-based agents. The task is to solve the core objective of each game, e.g. defeating the final boss in Super Mario Land or completing the entire single-player campaign for Age of Empires.

The benchmark gathers gameplay scenarios from iconic titles spanning multiple genres and decades. Each task requires models to understand visual game states, interpret objectives, and make strategic decisions to progress through levels or achieve specific goals. VideoGameBench challenges models to complete entire games using only raw visual inputs and a high-level description of objectives and controls, a significant departure from existing setups that rely on game-specific scaffolding and auxiliary information.

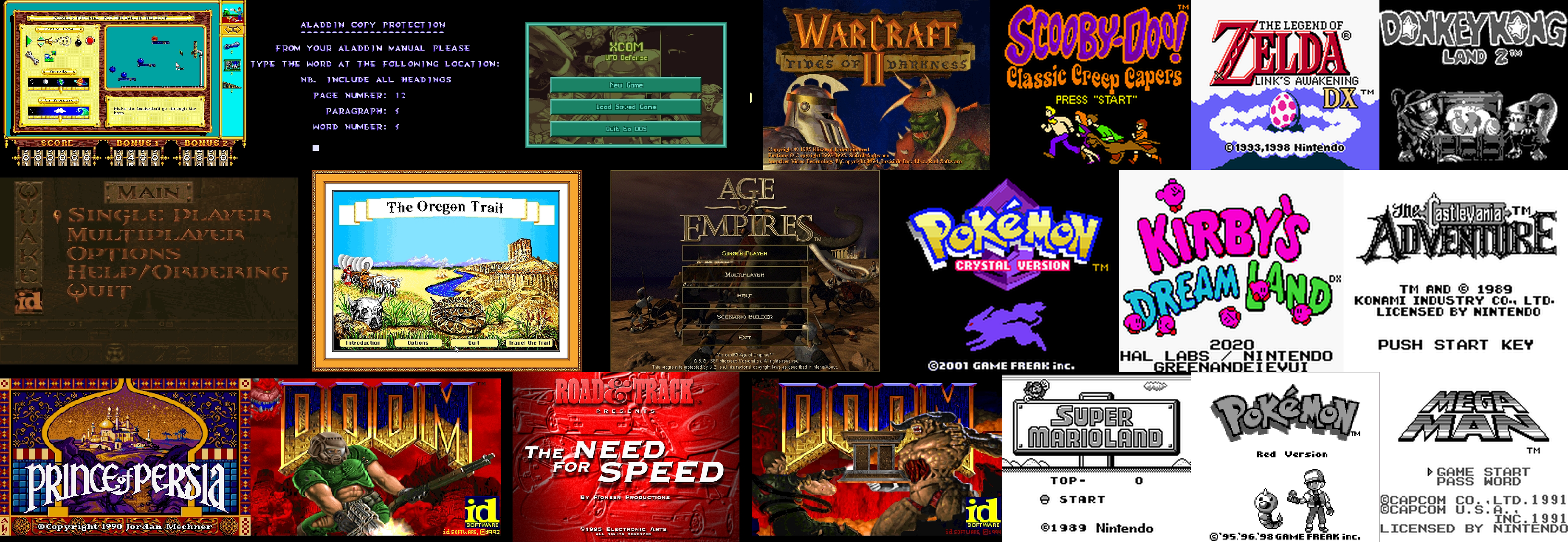

The public games on VideoGameBench: 20 different titles across the MS-DOS and Game Boy platforms.

A major bottleneck in playing games with VLMs is the high inference time required to produce each action. To address this, VideoGameBench also includes a Lite setting, in which the game environment pauses while waiting for the model's next action.
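The difference between the two settings can be illustrated with a toy simulation. This is a minimal sketch, not the benchmark's actual environment code: `ToyGame`, `run_episode`, the 60 fps frame rate, and the 2-second inference latency are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ToyGame:
    """Hypothetical stand-in for an emulator; it only counts elapsed frames."""
    fps: int = 60      # assumed frame rate, for illustration only
    frame: int = 0

    def advance(self, seconds: float) -> None:
        # Let the game run unattended for `seconds` of wall-clock time.
        self.frame += round(seconds * self.fps)

    def step(self, action: str) -> None:
        # In this toy model, applying an action consumes exactly one frame.
        self.frame += 1


def run_episode(game: ToyGame, actions: list[str], latency_s: float, lite: bool) -> int:
    """Return how many frames have elapsed once every action has been issued."""
    for action in actions:
        if not lite:
            # Full setting: the game keeps running while the model is thinking.
            game.advance(latency_s)
        # Lite setting: the game is paused during inference, so no frames pass.
        game.step(action)
    return game.frame


actions = ["A", "RIGHT", "A"]
full_frames = run_episode(ToyGame(), actions, latency_s=2.0, lite=False)
lite_frames = run_episode(ToyGame(), actions, latency_s=2.0, lite=True)
print(full_frames, lite_frames)  # 363 3
```

Under these assumed numbers, the game in the Full setting advances 120 frames unattended before each action lands, whereas in the Lite setting the only frames that pass are the ones the agent acts on.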

VideoGameBench evaluates AI systems on tasks that require both visual understanding and strategic thinking, showing how well current models can perceive and act in complex interactive environments.

VG-Agent on VideoGameBench

Examples of VLM agents playing games on VideoGameBench. These clips demonstrate both the capabilities and current limitations of state-of-the-art vision-language models in real-time gaming.

Example 1. Gemini 3.1 Pro Preview plays Kirby's Dream Land, clearing Green Greens by defeating Whispy Woods, progressing through Castle Lololo, and entering Bubbly Clouds. The agent demonstrates real platforming competence but eventually gets stuck in a door-landing loop, unable to resolve the UP-to-enter-door vs UP-to-fly ambiguity.

Example 2. Gemini 3.1 Pro Preview plays Civilization I, founding Rome and exploring extensively, reaching approximately 2093 AD with active diplomacy and wars against the Mongols. The agent managed tech exchanges, city crises, and strategic decisions, though it periodically got caught in end-of-turn loops before breaking out by moving units.

Example 3. Gemini 3.1 Pro Preview plays The Legend of Zelda: Link's Awakening, getting the shield from Tarin, exiting the house, and exploring Mabe Village extensively. The agent wanders through the village searching for the south exit to Toronbo Shores, only reaching the boundary near the very end. No model reached the sword across any run, highlighting challenges in spatial navigation and pathfinding.

Example 4. Claude Opus 4.6 attempts The Incredible Machine, trying the widest variety of interactions including Enter, Space, Escape, right-click, double-click, and dozens of coordinate pairs. Despite creative attempts, no interaction registered with the DOS emulator. All three models failed to clear even Tutorial Puzzle 1, highlighting fundamental challenges with drag-and-drop interactions.

Example 5. GPT 5.4 plays Pokemon Crystal, spending the entire run stuck in the starting bedroom, unable to find the staircase to go downstairs. The agent cycles through directional inputs trying to navigate out but never leaves the first room, highlighting fundamental challenges with spatial navigation in RPG environments.

Example 6. Claude Opus 4.6 plays Doom II on VideoGameBench, demonstrating combat competence and genuine progression through the game. The agent eventually gets stuck in navigation loops due to keyboard-only turning causing heading drift, repeatedly bumping against walls and failing to re-orient.

Current state-of-the-art VLMs exhibit varying degrees of competency across different game genres. While they can perform basic interactions such as movement, menu navigation, and simple combat, they consistently struggle with higher-order cognitive tasks including long-term planning, spatial reasoning, and goal persistence.